Data Dive #15: 📉 Duplicate Datasets and the Bottom Line

Recognizing the subtle undertows of duplicate datasets empowers us to refine our BI practices, ensuring that our decision-making tools aren't just razor-sharp but also trustworthy.

We've all been there – navigating the vast seas of our business intelligence (BI) environments and stumbling upon duplicate datasets. On the surface, these might seem like mere redundancies, perhaps even harmless backup measures. But in the intricate realm of BI, these duplicates come with their own set of strings attached, subtly impacting a company's bottom line.

For starters, let's discuss infrastructure. With every additional copy of a dataset, we're allocating more resources to storage. This can escalate costs significantly in cloud-centric businesses, where expenses are tethered to storage and data transfers.

Then, there's the underlying complexity of data management. Duplicate datasets intensify the challenge of maintaining consistent data governance protocols, opening doors to potential inconsistencies and errors. Before we realize it, different departments, armed with their versions of the 'truth,' could make decisions based on diverse cleaning and processing methodologies.

It doesn't stop there. The ripple effect touches our most valuable asset: time. Imagine teams trying to decipher the freshest or most relevant dataset, their cognitive wheels spinning, delaying crucial decisions. These moments of hesitation don't just eat into our productivity but also cast a shadow of doubt over our BI systems. How often have we second-guessed a dataset's accuracy just because there's another similar one lurking around?

Let's declutter, streamline, and, most importantly, ensure that our data works for us, not against us.

Try a 14-day trial of our SaaS solution!

Connect in just a few minutes, and refer to our Docs if you need help.

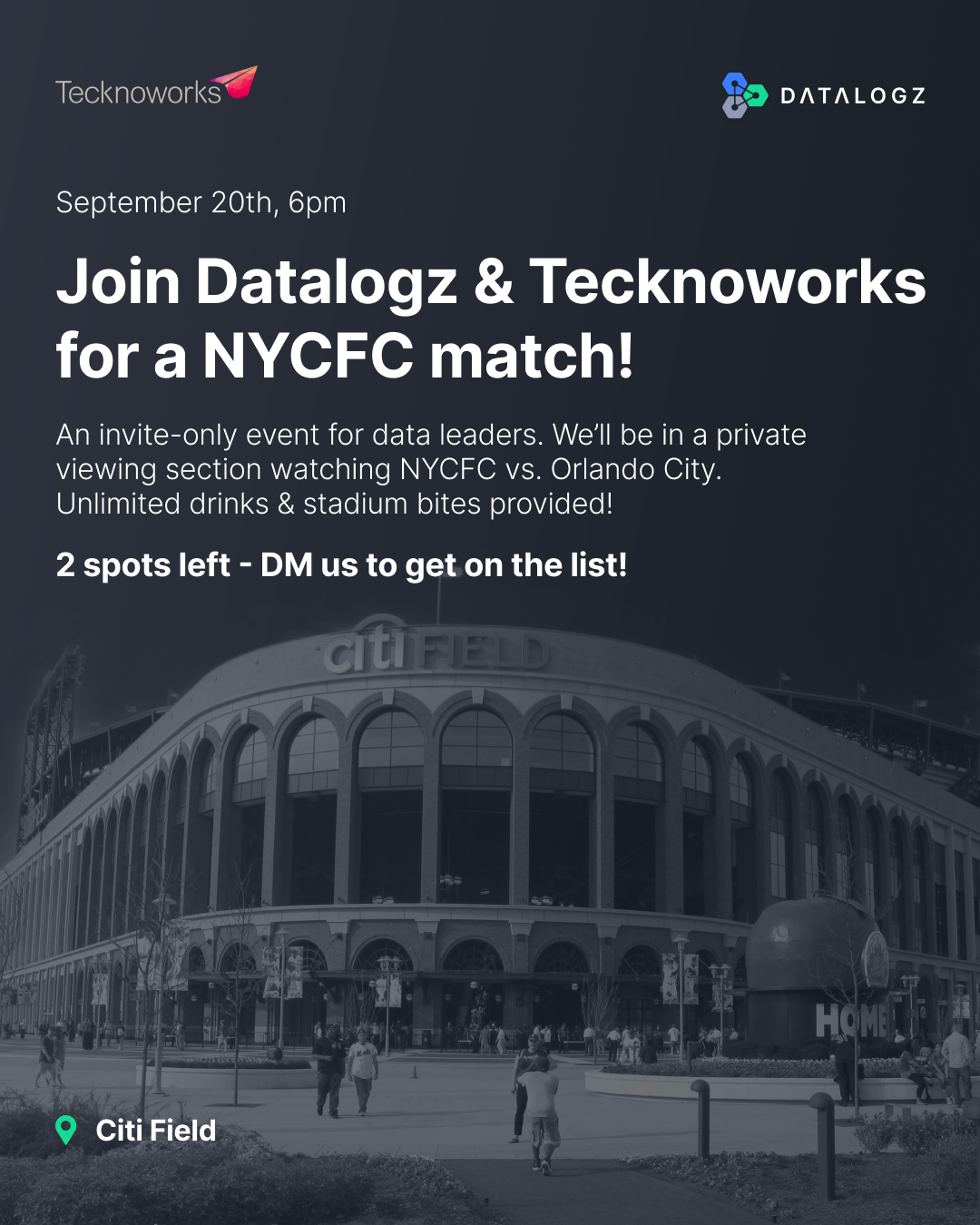

⚽ Are you a soccer fanatic?

Datalogz, in collaboration with Tecknoworks, is hosting an invite-only soccer experience at Citi Field for data leaders in NYC and beyond.

First come, first served! Two spots opened up due to cancellations, so feel free to RSVP. If spots are still available, you will be approved!

🇳🇱 We're heading to the AI & Big Data Expo in the Netherlands!

Datalogz is thrilled to be a part of one of the most prestigious tech events in Europe. Our CEO will be taking the stage to discuss Navigating the AI Era of Enterprise Analytics.

Whether you're a fellow start-up, an enterprise leader, or just enthusiastic about AI & Big Data, we'd love to connect. Swing by our booth to see how Datalogz can help revolutionize your business intelligence journey!

Frequently Asked Questions

Common questions about this topic, answered.

How do duplicate datasets impact business intelligence costs?

Duplicate datasets increase infrastructure costs through redundant storage allocation, which is especially significant for cloud-based businesses where expenses scale with storage and data transfers. They also create hidden productivity costs as teams waste time determining which dataset is the most current or accurate, delaying critical business decisions.

What are the risks of having duplicate data in a BI environment?

Duplicate datasets create data governance challenges by introducing inconsistencies and errors across the organization. Different departments may end up making decisions based on conflicting versions of the 'truth,' each processed and cleaned using different methodologies. This undermines trust in BI systems and leads to second-guessing data accuracy.

How can I identify and eliminate duplicate datasets in Tableau or Power BI?

BI observability platforms like Datalogz can automatically detect duplicate and redundant content across BI environments, including Tableau and Power BI. Datalogz has identified over 1.4 million optimization issues across customer environments, with cost management alerts alone surfacing over $8.2M in avoidable BI spend, much of which stems from duplicate and unused assets.
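For a rough sense of what duplicate detection can look like, here is a minimal Python sketch that groups datasets by a content fingerprint. It is an illustration only, not how Datalogz, Tableau, or Power BI work internally. The function names (`fingerprint`, `find_duplicates`) and the sample dataset names are hypothetical, and the sketch assumes each dataset has already been exported to a list of column names plus a list of rows.

```python
import hashlib
import json
from collections import defaultdict


def fingerprint(columns, rows):
    """Return a hash of a table that ignores column order and row order.

    Assumption: values are compared by their string form, so 100 and "100"
    are treated as the same value.
    """
    # Visit columns in name order so reordered columns hash identically.
    order = sorted(range(len(columns)), key=lambda i: columns[i])
    header = json.dumps([columns[i] for i in order])

    # Hash each row on its own, then sort the hashes so row order is ignored.
    row_hashes = sorted(
        hashlib.sha256(
            json.dumps([str(row[i]) for i in order]).encode("utf-8")
        ).hexdigest()
        for row in rows
    )

    digest = hashlib.sha256(header.encode("utf-8"))
    for row_hash in row_hashes:
        digest.update(row_hash.encode("ascii"))
    return digest.hexdigest()


def find_duplicates(datasets):
    """Group dataset names whose contents share the same fingerprint."""
    groups = defaultdict(list)
    for name, (columns, rows) in datasets.items():
        groups[fingerprint(columns, rows)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical sample data: the second dataset is a shuffled copy of the first.
datasets = {
    "sales_q1": (["region", "revenue"], [("EMEA", 100), ("APAC", 250)]),
    "sales_q1_copy": (["revenue", "region"], [(250, "APAC"), (100, "EMEA")]),
    "sales_q2": (["region", "revenue"], [("EMEA", 120), ("APAC", 250)]),
}

print(find_duplicates(datasets))
```

A fingerprint like this only catches exact copies. Datasets that were cleaned or filtered differently, like the departmental versions of the 'truth' described above, would need fuzzier comparison such as schema overlap or lineage metadata.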

What is BI sprawl and how does it affect data governance?

BI sprawl refers to the unmanaged proliferation of dashboards, reports, datasets, and data sources across an organization. It complicates data governance by making it difficult to maintain consistent protocols, leading to potential inconsistencies, wasted storage, and eroded trust in analytics. Datalogz currently governs more than 720,000 BI assets across enterprise deployments to help organizations combat this sprawl.

How do duplicate datasets affect team productivity and decision-making?

Teams lose valuable time trying to identify the freshest or most relevant dataset among duplicates, creating cognitive overhead that delays crucial decisions. This hesitation not only reduces productivity but also casts doubt over the reliability of BI systems, causing analysts and decision-makers to second-guess their data sources.